Why healthy doubt beats AI confidence theater

AI will confidently sign off on anything. The question is whether you will too.

I’m a member of my old high school’s “AI council”. My kids go there now, so I have both my own experience and theirs to draw on when I speak there. Recently the council asked me to talk to a group of teachers about AI, as part of its effort to help teachers navigate what AI means for schools. The subject they gave me was a logical one for me: the implications of AI for the workforce.

This high school is what’s called a “Gymnasium” in the Netherlands. It’s the highest level of secondary education we have, and besides all the normal subjects it teaches Latin and ancient Greek, and with them the mythology and philosophy of both cultures. As I was preparing, I realized this should be a blog post too.

The term “AI confidence theater” comes from Christina Aguilera’s piece on leadership, which is worth reading. She writes about executives performing certainty about AI while privately still figuring it out. That’s real. But this post is about a different layer of the problem: the AI itself.

The confidence problem

Ask Claude, Gemini, or ChatGPT anything and you’ll get a polished, articulate, supremely confident answer. Even when it’s wrong. Especially when it’s wrong.

Socrates built an entire philosophy around “I know that I know nothing.” AI does the opposite: it knows nothing about knowing nothing. It has no concept of its own ignorance. Every hallucination arrives dressed in the same crisp suit as every correct answer.

The philosopher Hannah Arendt drew a sharp line between thinking and knowing. Knowing is accumulating facts and producing answers. Thinking is the harder thing: examining those answers, sitting with uncertainty, refusing to stop at the surface. AI is a knowing machine. It has no capacity for the other thing.

This is the core problem we’re not talking about enough: we’ve built machines that perform certainty, and we’re raising a generation that’s learning to mistake that performance for truth.

The death of average

A passable 500-word essay now costs zero effort and ten seconds. A working function in most programming languages, same story. A first-draft marketing plan, a competitive analysis, a translation. All reduced to a button press. The floor hasn’t just risen. It’s been catapulted.

What this means practically: average output has no market value anymore. The “good enough” report, the “decent” first draft, the code that merely works. AI produces all of it faster, cheaper, and more consistently than any human can. If your only skill is producing average work, you’re competing against a machine that does it at near-zero marginal cost. You will lose.

But here’s what AI can’t do: it can’t tell you whether its output is actually good. It can’t feel that something is off. It can’t exercise judgment born from years of getting things wrong and learning why. That’s the human edge. Not production, but evaluation.

The junior paradox

This leads to what might be the most uncomfortable workforce question of the decade.

You become a senior by doing years of junior work. Aristotle argued in the Nicomachean Ethics that you learn craft and virtue through practice, by doing, repeatedly, until understanding becomes instinct. There’s no shortcut. You parse hundreds of sentences before Latin grammar becomes intuitive. You write thousands of lines of bad code before architecture clicks.

But if AI handles all the junior work, where do the future seniors come from? (I wrote about the flip side of this in The death of Code Copyright and the rise of the Architect: when execution becomes a commodity, what’s left is intent, direction, and logic. The Architect is the senior who made it through. The question is how we get there without the steps in between.)

I’m already seeing this play out. Developers who call themselves “senior” because they generate code at impressive speed, but who collapse the moment they hit a bug the model can’t resolve. They never put in the hours. They skipped the reps. They have the job title without the scar tissue.

And employers are catching on. Why hire a junior you need to train for three years when AI does their job today? It’s an obvious short-term decision and a slow-motion disaster for the industry. We’re removing the very path that made senior developers possible.

The only way through: the junior work still has to happen, but the bar for what constitutes “junior” has moved up dramatically. Entry-level now means you can do what AI does plus catch where it fails. That’s a fundamentally different starting point than five years ago.

Pilot or passenger?

There’s a useful mental model here: are you the pilot using autopilot to fly better, or the passenger who doesn’t know how to land the plane when the power goes out?

The autopilot metaphor is apt because real pilots don’t trust autopilot blindly. They understand every system it controls. They monitor. They override. They train constantly for the scenarios where automation fails, because it always eventually does.

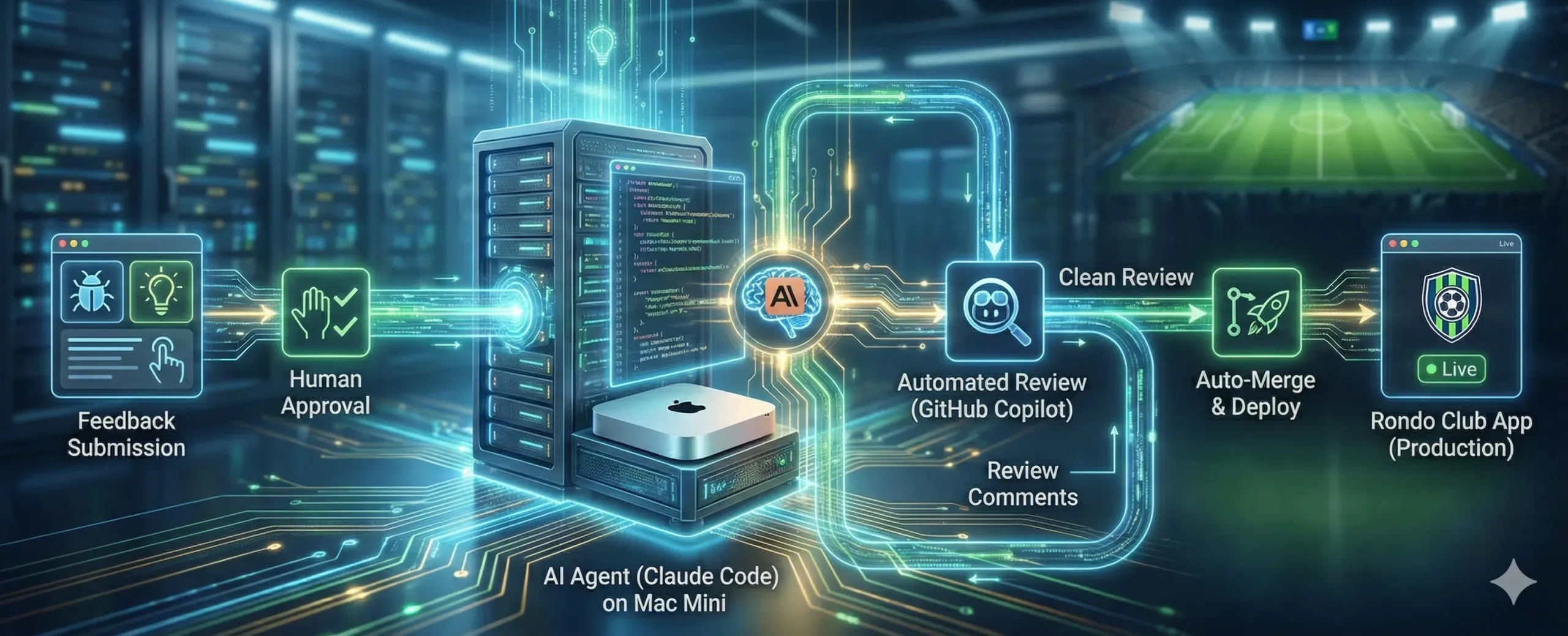

Think of AI as a very capable intern (or 20 of them, in fact). Fast, eager, able to handle enormous volumes of routine work. But you’d never let an intern sign off on a contract, publish without review, or make an architectural decision. The person with skin in the game does that. The person who lies awake when it breaks.

The market is firing passengers and hiring pilots. It’s eliminating executors and desperately seeking directors: people with the autonomy and judgment to orchestrate AI output into something that actually works in context. The Architect, in other words.

The Descartes reflex

René Descartes might be history’s first debugger. His method: break any complex problem into the smallest possible pieces, verify each one, then rebuild.

This is exactly the skill AI threatens to make obsolete, and exactly the skill that matters most when working with AI.

AI always presents a smooth surface. The text reads well. The code compiles. The analysis looks thorough. But underneath, the logic might be fractured, the sources might be fabricated, the reasoning might be circular. Without the reflex to decompose and verify, to refuse to accept something as true just because it looks true, you’re at the mercy of the machine’s hallucinations.

I think of this as the Descartes Reflex: the trained instinct to stop, take apart, and verify before accepting. It’s not paranoia. It’s intellectual self-defense.

Classical education, interestingly, has been training exactly this reflex for centuries. Parsing a complex Latin sentence is decomposition. You can’t consume it whole. You have to break it apart, identify each grammatical relationship, and reconstruct meaning from the pieces. That’s not a dusty academic exercise. It’s the single most relevant cognitive skill for the AI age.

Sapere aude in 2026

In 1784, the German philosopher Immanuel Kant wrote a short essay with one central message: stop outsourcing your thinking to authority. He gave it a Latin motto, Sapere aude: literally “dare to know”, or dare to think for yourself. He aimed it at a public too comfortable deferring to church and king.

Replace “church and king” with “algorithm and model” and the message is more urgent than ever.

Every time you accept an AI answer without interrogation, you’re choosing intellectual dependency. Every time you ask “why?” and dig into the reasoning, you’re exercising the kind of autonomy that no machine possesses.

This isn’t anti-AI. I use AI every day. I write code with it roughly 20x faster than I could alone. But every line that ships is still mine. If there’s a bug, it’s my bug. That’s not a limitation. It’s the point. You can use AI to outsource the work. You can’t outsource the responsibility.

What actually needs to change

If we take this seriously, a few things follow:

For educators: Stop grading the product (unless you know it was made in a controlled classroom with no phones in sight). Start interrogating the process. Asking “what’s your answer?” is a lost game, because AI will always have an answer. Ask instead: “Why this word and not that one? What was the alternative? How do you know this is right?” Be the Socratic gadfly. The students who can survive that interrogation are the ones who’ll thrive.

For managers and founders: Hire for judgment, not output volume. The person who can tell you why the AI’s answer is wrong is worth ten people who can generate AI answers quickly. Invest in humans who think, not humans who prompt.

For anyone entering the workforce: Your value isn’t in what you produce. AI produces more, faster, always. Your value is in what you understand. In the gap between “this looks right” and “this is right.” In the willingness to say “I don’t know yet” when the machine is already confidently wrong.

For all of us: Philosophy isn’t a luxury anymore. The skills it teaches (deconstructing arguments, questioning assumptions, distinguishing valid from sound reasoning, exercising judgment under uncertainty) aren’t nice-to-haves. They’re cognitive self-defense.

The uncomfortable truth

AI makes the average worker obsolete, but the excellent worker irreplaceable.

That’s not a comfortable message. It means the stakes are higher, the climb is steeper, and “good enough” is a dead end. But it also means that genuine human capability, the kind built through struggle, failure, and hard-won understanding, has never been more valuable.

The wind is in the sails. Humans still set the course. And when it goes wrong, you can’t blame the wind.
